Top Tools to Evaluate and Benchmark AI Agent Performance in 2026

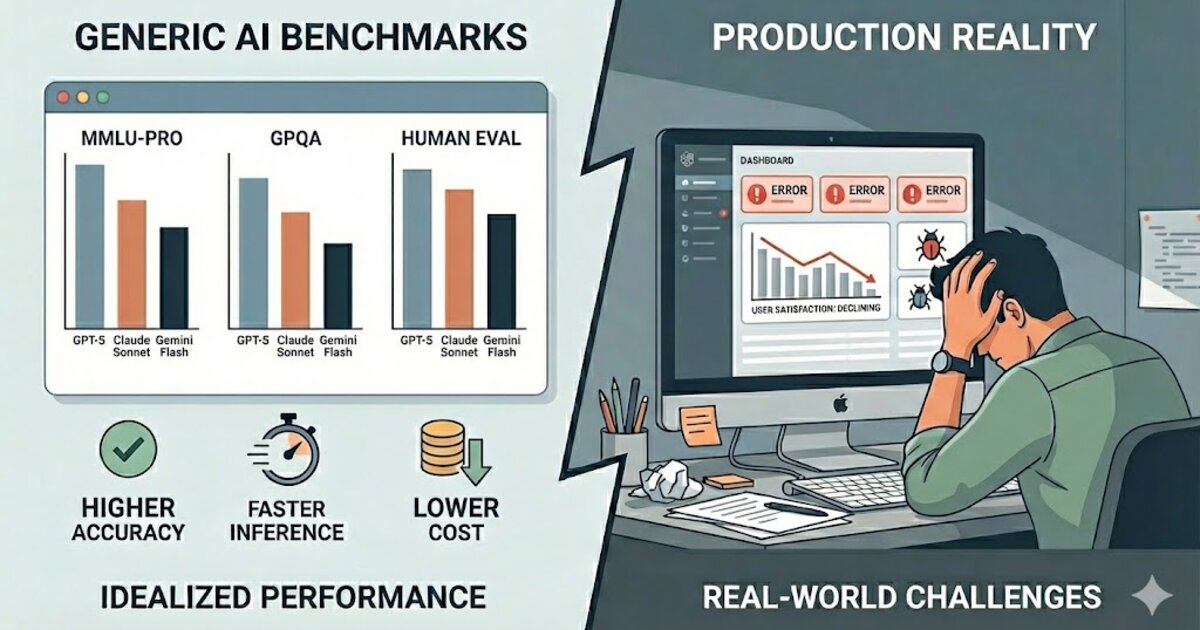

Evaluating AI agents is harder than evaluating LLM outputs. A single agent run involves dozens of decisions (which tools to call, in what order, with what arguments), and failures are compositional. An agent can execute every step correctly and still produce a wrong result because the reasoning connecting those steps was flawed. Standard benchmark metrics borrowed from LLM evaluation don't surface this kind of failure.

The cost of missing it is already visible in production. A customer service agent can achieve 100% tool-call accuracy while still violating policy on edge cases. A research agent can successfully call every required API and still deliver a summary a domain expert would reject. These aren't fringe scenarios. They're the normal failure mode for agents deployed in domains where correctness is contextual and defined by people, not metrics.

We evaluated seven platforms built for agent evaluation and benchmarking in 2026. Most tools measure how your agent ran. Truesight measures whether it worked.

Comparison at a glance

| Rank | Tool | Best For | Agent Eval Approach | Framework Support | Starting Price |
|---|---|---|---|---|---|
| 1 | Truesight | Domain-expert outcome scoring | Expert-defined pass/fail criteria, live eval API | Any (API-based) | $19/mo |
| 2 | W&B Weave | Production-scale tracing + local scorers | Local SLM scorers, step-level tracing | LangChain, LlamaIndex, custom | $60/mo |
| 3 | Braintrust | CI/CD-integrated agent testing | Loop AI automation, 8 RAG metrics, GitHub Actions | LangChain, OpenAI Agents, custom | $249/mo |
| 4 | Arize Phoenix | OTel-native agent observability | Dedicated agent evaluators, embedding visualization | 15+ via OTel | Free / $50/mo |
| 5 | LangSmith | Multi-turn LangGraph evaluation | Multi-turn tracing, step-level scoring, Polly AI | LangChain, LangGraph | $39/seat/mo |
| 6 | Comet Opik | High-volume traces + Agent Optimizer | 6-algorithm Agent Optimizer, 40M traces/day | LangChain, OpenAI, custom | $19/mo |
| 7 | DeepEval | Deterministic DAG metric evaluation | DAG metric for agent paths, 6 agent-specific metrics | Python-first, custom | Free / $19.99/user/mo |

What benchmarking AI agent performance actually requires

Agent evaluation has two distinct halves, and most platforms have only solved one of them.

Step-level tracing is the solved half: tool-call accuracy, trajectory analysis, loop detection, latency per step, input/output logging at each node. All seven platforms on this list handle this reasonably well. It tells you how the agent executed.
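To make the step-level half concrete, here is a minimal sketch of three of those signals computed from a recorded trace. The `Step` record, the tool names, and the repeat threshold are assumptions made for illustration; they are not any listed platform's schema or API.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    tool: str          # tool the agent called
    args: tuple        # call arguments, kept hashable so calls can be counted
    latency_ms: float  # wall-clock time for this step


def tool_call_accuracy(steps, expected_tools):
    """Fraction of positions where the called tool matches the expected one."""
    if not expected_tools:
        return 0.0
    hits = sum(1 for s, e in zip(steps, expected_tools) if s.tool == e)
    # Dividing by the longer sequence penalizes both extra and missing calls.
    return hits / max(len(steps), len(expected_tools))


def repeated_calls(steps, threshold=3):
    """Return (tool, args) pairs seen at least `threshold` times: a crude loop signal."""
    counts = Counter((s.tool, s.args) for s in steps)
    return [call for call, n in counts.items() if n >= threshold]


# Hypothetical trace of a refund-handling agent.
trace = [
    Step("search_orders", ("user-42",), 120.0),
    Step("get_refund_policy", (), 80.0),
    Step("issue_refund", ("order-7", 30), 200.0),
]
expected = ["search_orders", "get_refund_policy", "issue_refund"]

accuracy = tool_call_accuracy(trace, expected)
loops = repeated_calls(trace)
total_latency = sum(s.latency_ms for s in trace)
print(accuracy, loops, total_latency)
```

Every number here describes execution. None of them says whether the refund should have been issued.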

Outcome scoring is the unsolved half: did the agent accomplish the goal in a way a domain expert would approve? This can't be answered by replaying the execution trace. It requires someone who knows what "success" means in context (a clinician, a compliance officer, an educator, a product manager) to evaluate the final output against criteria they define. Most platforms leave this to custom scorer code, which means engineers translate domain knowledge into metrics, introducing noise and misalignment at the point that matters most.
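The gap between the two halves can be sketched in a few lines. In this hypothetical example, a run has a flawless tool trajectory but fails a criterion a policy owner wrote down. The `RunResult` fields, the criteria, and the 30-day window are all illustrative assumptions, and the deterministic predicates stand in for what would usually be a human or LLM-judge assessment.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RunResult:
    tool_calls_ok: bool       # every tool call matched the expected trajectory
    refund_amount: float      # what the agent actually refunded
    order_total: float        # value of the original order
    days_since_purchase: int  # age of the order when the refund was issued


# Hypothetical criteria expressed in the policy owner's terms, not in trace terms.
CRITERIA: dict[str, Callable[[RunResult], bool]] = {
    "refund does not exceed order total": lambda r: r.refund_amount <= r.order_total,
    "refund issued within 30-day window": lambda r: r.days_since_purchase <= 30,
}


def score_outcome(run):
    """Return (passed, failed_criteria) for a single agent run."""
    failed = [name for name, check in CRITERIA.items() if not check(run)]
    return (not failed, failed)


run = RunResult(tool_calls_ok=True, refund_amount=50.0,
                order_total=50.0, days_since_purchase=45)
passed, failed = score_outcome(run)
print(run.tool_calls_ok, passed, failed)
```

The run passes every execution check and still fails the outcome check, because the policy window is something the trace alone does not encode.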

Platform breakdowns

1. Truesight

Domain experts define what a successful agent run looks like, in plain language through a no-code interface, and those criteria get deployed as live evaluation endpoints scoring every run. The platform integrates into production pipelines via API, so agent outputs are scored continuously rather than in offline test harnesses. That distinction matters for teams shipping agents into regulated domains where "technically executed correctly" and "actually worked" aren't the same thing.

- Expert-defined evaluation criteria, no code required

- Live API endpoints score agent outputs in production pipelines

- Human review queue with frozen config snapshots for audit provenance

- Multi-model judge support: OpenAI, Anthropic, Google, any LiteLLM provider

- Systematic error analysis to surface where agents consistently fail

Best for: Teams evaluating agents in domains where domain experts, not engineers, define what "success" looks like.

2. Weights & Biases Weave

Now part of CoreWeave following a 2025 acquisition, Weave offers production-scale agent tracing with local SLM scorers that run entirely within the customer's environment. Framework support is broad, and the compliance certification set is the widest on this list. The acquisition leaves some uncertainty in the product roadmap, which is worth tracking before making a long-term commitment.

- Local SLM scorers: evaluation runs in-environment, no data leaves

- Broadest compliance certifications: SOC 2, ISO 27001/17/18, HIPAA, NIST 800-53

- Dedicated single-tenant cloud across AWS, GCP, Azure

- Step-level tracing with multi-turn agent support

- Widely adopted across enterprise and research teams

Best for: Organizations where compliance certification breadth and local execution of scorers are both requirements.

3. Braintrust

A strong option for teams with CI/CD evaluation pipelines. The Loop AI feature automates experiment cycles by running evals, analyzing failures, and generating improved prompts without manual intervention. GitHub Actions integration means agent evaluation can gate deployments. The data plane runs in the customer's VPC on all paid tiers.

- Loop AI: automated evaluation-improvement cycles

- GitHub Actions integration for deployment-gated evaluation

- Customer-VPC data plane on all paid plans

- 8 RAG-specific metrics plus custom scorer support

- Notable customers: Stripe, Notion, Instacart, Dropbox

Best for: Teams that want automated evaluation-improvement loops integrated into their deployment pipeline.
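Deployment gating in general comes down to turning evaluation results into a process exit code. The sketch below shows that idea in plain Python; it is not Braintrust's SDK or its GitHub Action, and the `gate` function, the 90% threshold, and the sample results are assumptions for illustration.

```python
def gate(results, min_pass_rate=0.9):
    """Return an exit code: 0 if the eval pass rate clears the bar, 1 otherwise."""
    if not results:
        return 1  # no evidence is treated as a failure
    pass_rate = sum(results) / len(results)
    print(f"pass rate: {pass_rate:.0%} (required: {min_pass_rate:.0%})")
    return 0 if pass_rate >= min_pass_rate else 1


# Hypothetical results; in practice these would come from the eval run itself.
eval_results = [True] * 17 + [False] * 3
exit_code = gate(eval_results)
print("exit code:", exit_code)
```

In a CI step, passing the return value to `sys.exit` makes a nonzero code fail the job, which is what blocks the deployment.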

4. Arize Phoenix

The only fully OTel-native platform on this list. Instrumentation is portable and vendor-agnostic by default. Includes dedicated agent evaluators out of the box, embedding visualization for debugging retrieval steps, and a free self-hosted tier with no feature restrictions.

- OpenTelemetry-native: no proprietary tracing layer

- Dedicated agent evaluators for tool-use and multi-step evaluation

- Free self-hosting with no feature gates (Docker, K8s, AWS CloudFormation)

- SOC 2 Type II, HIPAA, GDPR with US/EU/CA data residency

- $70M Series C (Feb 2025); enterprise customers include Uber, Booking.com

Best for: Teams requiring portable, OTel-native instrumentation with zero vendor lock-in and free self-hosting.

5. LangSmith

The dominant choice for LangChain and LangGraph teams. Multi-turn agent evaluation is a first-class feature, with step-level scoring built specifically around LangGraph's node/edge structure. LangChain, the company behind it, reached a unicorn valuation ($1.25B) in late 2025. LangSmith works best when you're already in the LangChain ecosystem.

- Multi-turn agent evaluation with step-level scoring

- LangGraph-native: node/edge tracing built in

- Three deployment modes: cloud SaaS, hybrid BYOC, fully self-hosted

- 400-day extended trace retention for compliance evidence

- HIPAA, SOC 2 Type II, GDPR with SSO/SAML and SCIM provisioning

Best for: Organizations running LangChain or LangGraph that need multi-turn agent evaluation with Fortune 500-grade deployment options.

6. Comet Opik

The Agent Optimizer is the differentiating feature: six optimization algorithms that analyze evaluation results and propose prompt and config improvements automatically. At 40M traces/day capacity and Apache 2.0 licensing, it targets high-volume production workloads where cost and throughput matter.

- Agent Optimizer: 6 algorithms for automated prompt/config improvement

- 40M traces/day capacity for high-volume agent workloads

- Apache 2.0 license with no managed-service restrictions

- $19/mo Pro tier with unlimited team members

- SOC 2, ISO 27001, HIPAA compliance

Best for: Teams running high-volume agent workloads who want automated optimization loops at the lowest price point.

7. DeepEval by Confident AI

The deterministic DAG metric is the standout for agent evaluation. It maps evaluation criteria directly to the agent's execution graph rather than scoring outputs in isolation. The library ships 50+ built-in metrics, including 6 agent-specific ones. It is Python-only, and Confident AI is a YC W25 company with a shorter enterprise track record than the others on this list.

- DAG metric: evaluation criteria mapped to agent execution graph

- 6 agent-specific metrics plus 50+ total built-in metrics

- Native Pytest integration for CI/CD evaluation pipelines

- On-prem enterprise deployment on AWS, Azure, GCP

- SOC 2, HIPAA on higher tiers

Best for: Python-first teams that need deterministic, graph-aware agent evaluation with broad off-the-shelf metric coverage.
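As a rough illustration of what graph-aware, deterministic evaluation means in general, the sketch below checks an agent's trajectory against a dependency graph of required steps. It is not DeepEval's DAG metric or its API; `REQUIRED_BEFORE`, `check_trajectory`, and the step names are hypothetical.

```python
# Hypothetical task graph: each step maps to the steps that must precede it.
REQUIRED_BEFORE = {
    "fetch_account": set(),
    "check_policy": {"fetch_account"},
    "issue_refund": {"fetch_account", "check_policy"},
}


def check_trajectory(trajectory, required_before=REQUIRED_BEFORE):
    """Return a list of violations; an empty list means the path respects the graph."""
    violations = []
    seen = set()
    for step in trajectory:
        if step not in required_before:
            violations.append(f"unexpected step: {step}")
        else:
            missing = required_before[step] - seen
            if missing:
                violations.append(f"{step} ran before {sorted(missing)}")
        seen.add(step)
    # Steps the graph requires but the agent never executed.
    for step in required_before:
        if step not in seen:
            violations.append(f"missing step: {step}")
    return violations


good = check_trajectory(["fetch_account", "check_policy", "issue_refund"])
bad = check_trajectory(["fetch_account", "issue_refund", "check_policy"])
print(good)
print(bad)
```

Because the check is a pure function of the trajectory and the graph, the same run always gets the same verdict, which is the property that makes this style of evaluation usable as a CI assertion.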

How to choose

- Choose Truesight when domain experts (not engineers) need to define what agent success looks like, and evaluation needs to run continuously in production.

- Choose W&B Weave when compliance certifications and local SLM execution are both hard requirements.

- Choose Braintrust when automated evaluation-improvement loops integrated into CI/CD are the priority.

- Choose Arize Phoenix when OTel portability and free self-hosting with no feature gates are non-negotiable.

- Choose LangSmith when you're running LangChain/LangGraph and need multi-turn evaluation with enterprise deployment options.

- Choose Comet Opik when throughput and price matter most and you want permissive open-source licensing.

- Choose DeepEval when deterministic graph-aware evaluation and broad metric coverage matter for a Python-first team.

Agent evaluation that scores outcomes, not just execution.

Truesight lets domain experts define what a successful agent run looks like and deploys those criteria as live evaluation endpoints. No coding required.

Disclosure: Truesight is built by Goodeye Labs, the publisher of this article. We have aimed to provide a fair and accurate comparison based on each platform's documented capabilities as of March 2026.

Dr. Randal S. Olson

AI Researcher & Builder · Co-Founder & CTO at Goodeye Labs

I turn ambitious AI ideas into business wins, bridging the gap between technical promise and real-world impact.