Statistical analysis made easy in Python with SciPy and pandas DataFrames

I finally got around to finishing up this tutorial on how to use pandas DataFrames and SciPy together to handle any and all of your statistical needs in Python. This is basically an amalgamation of my two previous blog posts on pandas and SciPy.

This is all coded up in an IPython Notebook, so if you want to try things out for yourself, everything you need is available on github: https://github.com/briandconnelly/BEACONToolkit/tree/master/analysis/scripts

Statistical Analysis in Python

In this section, we introduce a few useful methods for analyzing your data in Python. Namely, we cover how to compute the mean, variance, and standard error of a data set. For more advanced statistical analysis, we cover how to perform a Mann-Whitney-Wilcoxon (MWW) RankSum test, how to perform an Analysis of variance (ANOVA) between multiple data sets, and how to compute bootstrapped 95% confidence intervals for non-normally distributed data sets.

Python's SciPy Module

The majority of data analysis in Python can be performed with the SciPy module. SciPy provides a plethora of statistical functions and tests that will handle the majority of your analytical needs. If we don't cover a statistical function or test that you require for your research, SciPy's full statistical library is described in detail at: http://docs.scipy.org/doc/scipy/reference/tutorial/stats.html

Python's pandas Module

The pandas module provides powerful, efficient, R-like DataFrame objects capable of calculating statistics en masse on the entire DataFrame. DataFrames are useful for when you need to compute statistics over multiple replicate runs.

For the purposes of this tutorial, we will use Luis Zaman's digital parasite data set:

Accessing data in pandas DataFrames

You can directly access any column and row by indexing the DataFrame.

You can also access all of the values in a column meeting a certain criteria.

Blank/omitted data (NA or NaN) in pandas DataFrames

Blank/omitted data is a piece of cake to handle in pandas. Here's an example data set with NA/NaN values.

DataFrame methods automatically ignore NA/NaN values.

However, not all methods in Python are guaranteed to handle NA/NaN values properly.

Thus, it behooves you to take care of the NA/NaN values before performing your analysis. You can either:

(1) filter out all of the entries with NA/NaN

If you only care about NA/NaN values in a specific column, you can specify the column name first.

(2) replace all of the NA/NaN entries with a valid value

Take care when deciding what to do with NA/NaN entries. It can have a significant impact on your results!

Mean of a data set

The mean performance of an experiment gives a good idea of how the experiment will turn out on average under a given treatment.

Conveniently, DataFrames have all kinds of built-in functions to perform standard operations on them en masse: `add()`, `sub()`, `mul()`, `div()`, `mean()`, `std()`, etc. The full list is located at: http://pandas.pydata.org/pandas-docs/stable/api.html#computations-descriptive-stats

Thus, computing the mean of a DataFrame only takes one line of code:

Variance in a data set

The variance in the performance provides a measurement of how consistent the results of an experiment are. The lower the variance, the more consistent the results are, and vice versa.

Computing the variance is also built in to pandas DataFrames:

Standard Error of the Mean (SEM)

Combined with the mean, the SEM enables you to establish a range around a mean that the majority of any future replicate experiments will most likely fall within.

pandas DataFrames don't have methods like SEM built in, but since DataFrame rows/columns are treated as lists, you can use any NumPy/SciPy method you like on them.

A single SEM will usually envelop 68% of the possible replicate means and two SEMs envelop 95% of the possible replicate means. Two SEMs are called the "estimated 95% confidence interval." The confidence interval is estimated because the exact width depend on how many replicates you have; this approximation is good when you have more than 20 replicates.

Mann-Whitney-Wilcoxon (MWW) RankSum test

The MWW RankSum test is a useful test to determine if two distributions are significantly different or not. Unlike the t-test, the RankSum test does not assume that the data are normally distributed, potentially providing a more accurate assessment of the data sets.

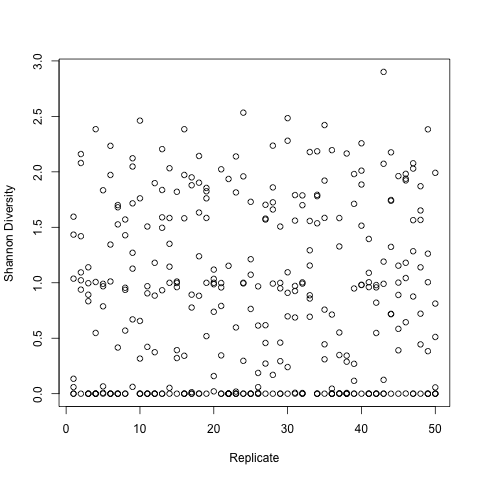

As an example, let's say we want to determine if the results of the two following treatments significantly differ or not:

A RankSum test will provide a P value indicating whether or not the two distributions are the same.

If P <= 0.05, we are highly confident that the distributions significantly differ, and can claim that the treatments had a significant impact on the measured value.

If the treatments do not significantly differ, we could expect a result such as the following:

With P > 0.05, we must say that the distributions do not significantly differ. Thus changing the parasite virulence between 0.8 and 0.9 does not result in a significant change in Shannon Diversity.

One-way analysis of variance (ANOVA)

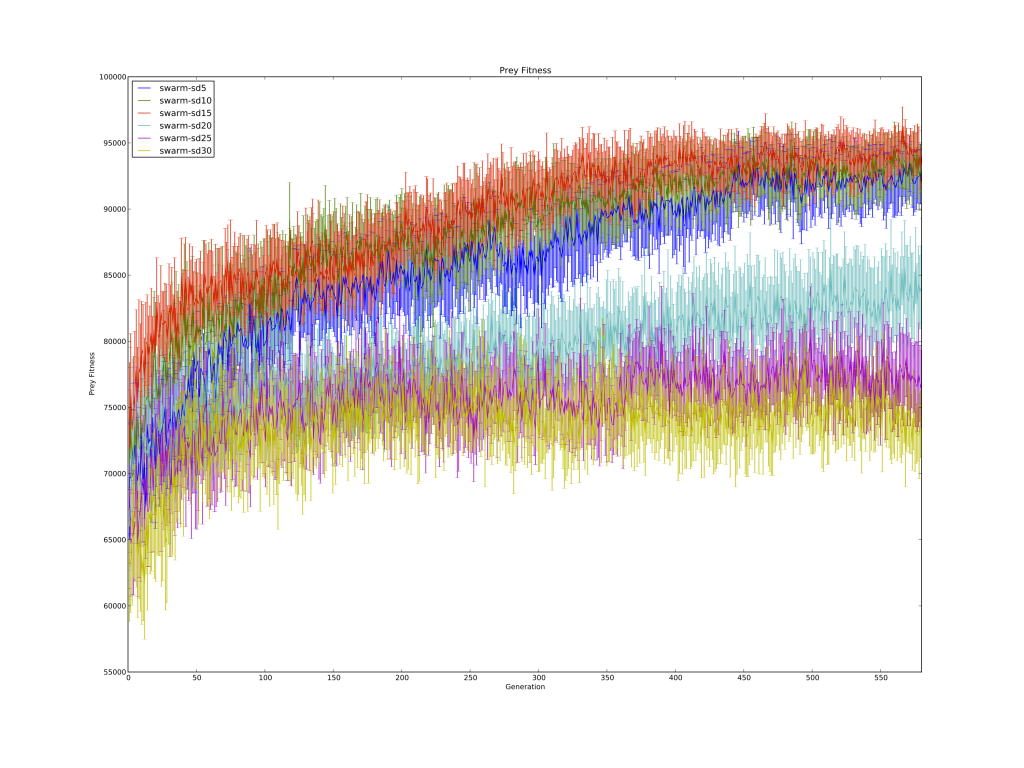

If you need to compare more than two data sets at a time, an ANOVA is your best bet. For example, we have the results from three experiments with overlapping 95% confidence intervals, and we want to confirm that the results for all three experiments are not significantly different.

If P > 0.05, we can claim with high confidence that the means of the results of all three experiments are not significantly different.

Bootstrapped 95% confidence intervals

Oftentimes in wet lab research, it's difficult to perform the 20 replicate runs recommended for computing reliable confidence intervals with SEM.

In this case, bootstrapping the confidence intervals is a much more accurate method of determining the 95% confidence interval around your experiment's mean performance.

Unfortunately, SciPy doesn't have bootstrapping built into its standard library yet. However, there is already a scikit out there for bootstrapping. Enter the following command to install it:

Bootstrapping 95% confidence intervals around the mean with this function is simple:

Note that you can change the range of the confidence interval by setting the alpha:

And also modify the size of the bootstrapped sample pool that the confidence intervals are taken from:

Generally, bootstrapped 95% confidence intervals provide more accurate confidence intervals than 95% confidence intervals estimated from the SEM.

Dr. Randal S. Olson

AI Researcher & Builder · Co-Founder & CTO at Goodeye Labs

I’ve worked in AI for 15+ years. At Goodeye Labs, we build AI products that point frontier models at the business outcomes a team actually cares about.