The Claude Code leak in four charts: half a million lines, three accidents, forty tools

Part of Teaching an AI Agent to Make Beautiful Charts

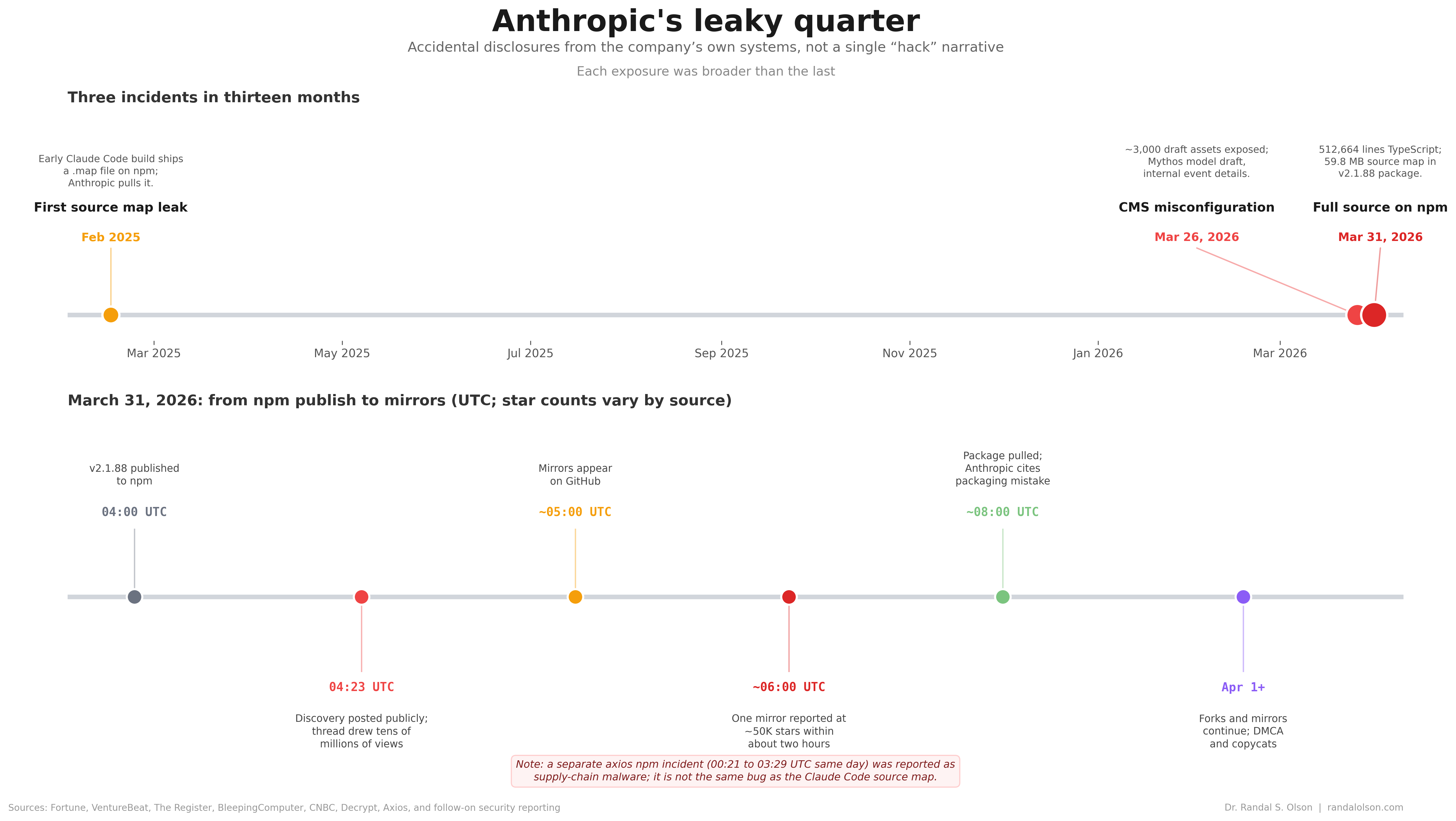

Version 2.1.88 of @anthropic-ai/claude-code hit npm on March 31, 2026 with a production source map still attached. Security researcher Chaofan Shou spotted the exposure; the map pointed at a zip archive on Anthropic’s Cloudflare R2 storage, and decompressing it recovered the full TypeScript tree. Anthropic told reporters the same thing it tells everyone after these slips: packaging mistake, human error, no customer credentials in the bundle. One early GitHub mirror collected over 41,500 forks before the company had time to respond. Mirrors are faster than statements.

I pulled one mirror of 2.1.88, counted 512,664 lines across 1,884 TypeScript and TSX files, and split the snapshot four ways below. None of this is an official Anthropic drop. It is a frozen tarball people can agree on while the fork count climbs.
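
For readers who want to reproduce a tally like this, here is a minimal Python sketch. It assumes the mirror is unpacked locally and that a "line" means any newline-delimited line in a `.ts` or `.tsx` file, blanks and comments included. The function name `count_ts_lines` is hypothetical, and this is not necessarily the script behind the counts here. A different counting rule, such as skipping blank lines or excluding `.d.ts` declarations, will move the totals.

```python
from __future__ import annotations

from collections import Counter
from pathlib import Path


def count_ts_lines(root: Path) -> tuple[Counter, int]:
    """Count lines in .ts/.tsx files under root, grouped by top-level directory.

    Returns (per-directory line totals, number of files counted).
    Files sitting directly in root are grouped under ".".
    """
    per_dir: Counter = Counter()
    n_files = 0
    for path in root.rglob("*"):
        # Skip directories and anything that is not TypeScript or TSX.
        if not path.is_file() or path.suffix not in {".ts", ".tsx"}:
            continue
        rel = path.relative_to(root)
        top = rel.parts[0] if len(rel.parts) > 1 else "."
        # Raw line count: blank lines and comments are included.
        with path.open("r", encoding="utf-8", errors="replace") as fh:
            per_dir[top] += sum(1 for _ in fh)
        n_files += 1
    return per_dir, n_files
```

Calling `count_ts_lines(Path("src"))` on an unpacked mirror returns per-directory totals that can feed a treemap directly. The `src` path is an assumption about the mirror's layout.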

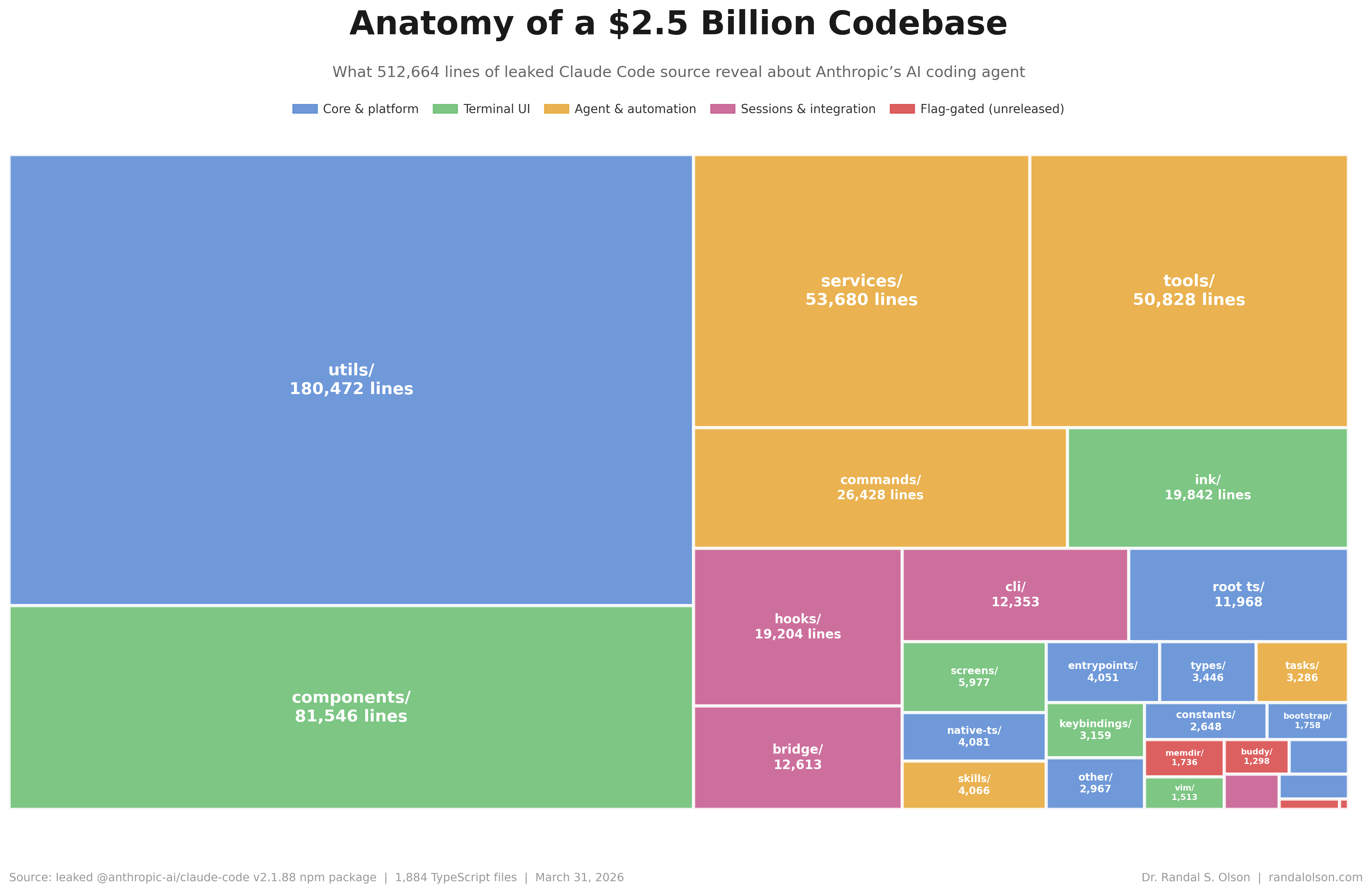

The money is in the plumbing, not the keynote slide

The utils/ directory alone is roughly 180k lines, a little over a third of the tree. Add components/, services/, tools/, and commands/, and you have the shape of a serious desktop agent: a React-style terminal UI (Ink shows up in writeups on the first leak), service glue, and a thick tool layer where the product actually touches the filesystem and shell. hooks/, bridge/, and cli/ add roughly another 44k lines between them: session hooks, IDE bridge glue, and the entry wiring that turns “agent in your repo” from a slogan into integrations. The Register pegged the March drop at about 512k lines in ~1,900 files, in the same ballpark as this count.

The flag-gated directories are where the rumor threads live. In this mirror, memdir/ edges out buddy/ on raw line count, while coordinator/ and voice/ sit in the low thousands inside a half-million-line tree. Great fuel for speculation, useless for sizing what actually ships.

Three accidents, no villain, escalating stakes

February 2025 was the dress rehearsal. Developer Dave Schumaker opened cli.mjs from an early release, spotted a fat inline sourceMappingURL, went to a vet appointment, and came back to find Anthropic had already stripped the string in a patch. He recovered the map from an undo buffer in Sublime Text: eighteen million characters of base64 on a single line, npm cache archaeology, undo history as forensics. That is not a hack story. It is a release-engineering mistake, and it repeated on a bigger stage.
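
Why an inline map is so revealing: in the Source Map v3 format, the optional `sourcesContent` array can carry the original source files verbatim, so a base64 `data:` URI in a `sourceMappingURL` comment can hold an entire codebase. Below is a minimal Python sketch of pulling those files back out. It assumes the map is inlined as `data:application/json;base64,...` and that `sourcesContent` is populated. The function name is hypothetical, and this is not how Schumaker recovered his copy; he used an editor's undo buffer.

```python
import base64
import json
import re

# Matches the trailing "//# sourceMappingURL=data:...;base64,<payload>" comment
# that bundlers emit when a map is inlined. The legacy "//@" prefix is accepted too.
_INLINE_MAP = re.compile(
    r"//[#@]\s*sourceMappingURL=data:application/json;"
    r"(?:charset=[^;,]+;)?base64,([A-Za-z0-9+/=]+)"
)


def extract_inline_sources(bundle_text: str) -> dict[str, str]:
    """Return {source path: original source text} from an inlined source map."""
    matches = _INLINE_MAP.findall(bundle_text)
    if not matches:
        return {}
    # If several map comments appear, the last one is the one that applies.
    source_map = json.loads(base64.b64decode(matches[-1]))
    sources = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    # sourcesContent is optional and may hold nulls for entries it omits.
    return {
        path: text
        for path, text in zip(sources, contents)
        if text is not None
    }
```

The design choice to read only the last match follows the convention that the final `sourceMappingURL` comment in a file wins. A map that lists `sources` without `sourcesContent` yields an empty result here, because the paths alone reveal structure but not code.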

Five days before the npm fire drill, Anthropic’s content management system leaked marketing drafts. Fortune reported that the company acknowledged testing a new model after researchers found the misconfigured content store. InfoWorld walked through draft copy describing a phased rollout aimed first at security teams, plus the odd detail that internal naming still said “Capybara” in places. It was a different failure mode from npm, with the same theme: internal material treated as public by default.

March 31 put the CLI on front pages. The Register reported that one early GitHub mirror had been forked more than 41,500 times before the week was out; one uploader later turned his repository into a Python port of the features, citing IP liability concerns, while forks kept spreading. InfoWorld quoted security researcher Tanya Janca on why this hurts more than a random npm typo: with high-value IP exposed, attackers can skip slow reverse engineering and read logic bugs in plain text.

Enterprise SKU, gacha mechanics

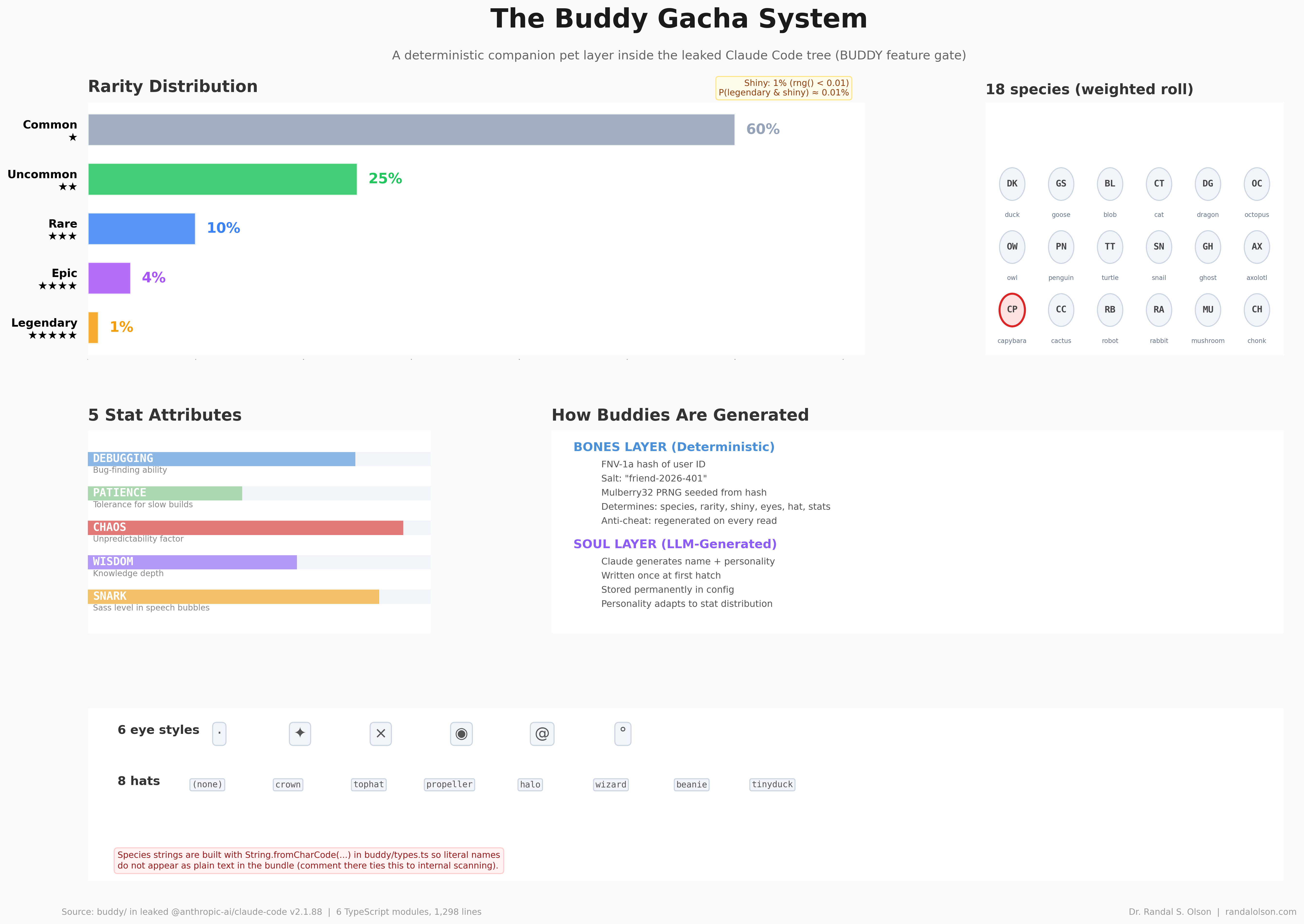

The buddy/ subtree is a weighted collectible game: eighteen species, five rarity tiers, hats, eye glyphs, and stats named like inside jokes. The chart mirrors the rarity weights baked into buddy/types.ts, 60 / 25 / 10 / 4 / 1, which sum to 100 and so read directly as percentages from the most common tier to the rarest. It reads like a side project smuggled into a repo that otherwise worries about JWT bridges and task orchestration.
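
To make those odds concrete, here is a minimal Python sketch of a weighted draw with the same 60 / 25 / 10 / 4 / 1 weights, using the standard library's `random.choices`. The tier labels are placeholders, and nothing here claims to reproduce the actual selection logic in buddy/types.ts.

```python
import random
from collections import Counter

# Weights as charted (60 / 25 / 10 / 4 / 1). The tier labels are
# hypothetical placeholders, not names taken from buddy/types.ts.
RARITY_WEIGHTS = {
    "tier_1": 60,
    "tier_2": 25,
    "tier_3": 10,
    "tier_4": 4,
    "tier_5": 1,
}


def roll_rarity(rng: random.Random) -> str:
    """Draw one tier with probability proportional to its weight."""
    tiers = list(RARITY_WEIGHTS)
    weights = list(RARITY_WEIGHTS.values())
    return rng.choices(tiers, weights=weights, k=1)[0]


def simulate(n: int, seed: int = 0) -> Counter:
    """Roll n times with a seeded RNG (for reproducibility) and tally tiers."""
    rng = random.Random(seed)
    return Counter(roll_rarity(rng) for _ in range(n))
```

Because the weights sum to 100, the rarest tier lands on about 1% of rolls. The expected wait for one is 100 rolls, and the chance of seeing at least one in 100 rolls is 1 - 0.99^100, roughly 63%.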

Compile-time gates mean this may never ship as-is, or may ship under another flag. But the rarity weights and stat names are in there now, sitting next to the task orchestrator and the IDE bridge. Some repos have easter eggs. This one has a gacha simulator.

Forty tools, one attack surface narrative

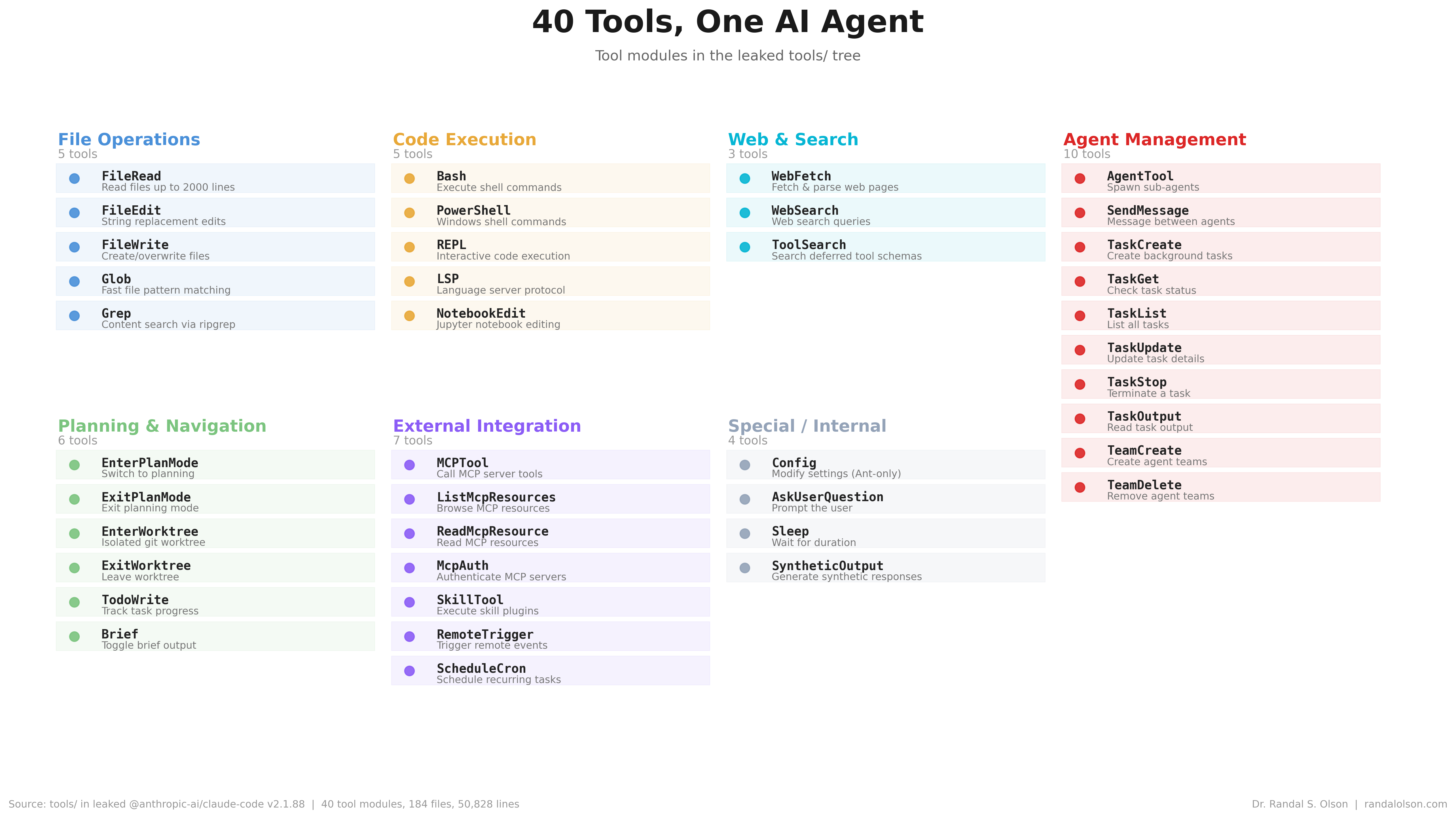

tools/ is about 50.8k lines in 184 files and forty concrete modules. The chart groups them the way the source does: file IO, shells and REPL, web fetch and search, multi-agent task plumbing, plan and worktree modes, MCP and skills hooks, and a small Special / Internal slice (config, user prompts, sleep, synthetic output).

The multi-agent block is worth a second look. Eight tools cover spawning sub-agents, tracking tasks, reading their output, and stopping runaway processes. That is not a chatbot with bash access. It is an orchestration platform built to manage parallel agents with independent task contexts. The tool list doubles as a plain capability inventory: read, write, execute, search, browse the web, spawn more agents, repeat. If you want to understand what an AI agent can do on your machine, this is the table of contents.

How this chart was made

An AI agent produced these four matplotlib figures as part of the Beautiful Charts with AI series. Each view was refined against the Tufte Test, a data visualization quality standard built by Goodeye Labs on Truesight. The treemap uses squarify; the timeline uses proportional dates on the top axis and a normalized hour strip below; the buddy and tools panels are layout code driven by tables in the mirrored tree.

Data source: aggregated counts from a community mirror of @anthropic-ai/claude-code@2.1.88 (npm publication March 31, 2026). The directory totals table is available here; the tool module listing is available here.


Dr. Randal S. Olson

AI Researcher & Builder · Co-Founder & CTO at Goodeye Labs

I turn ambitious AI ideas into business wins, bridging the gap between technical promise and real-world impact.